Doubts and Solutions

CBSE XII Science - Chemistry

Asked by hannamaryphilip | 17 Apr, 2024, 11:20 PM

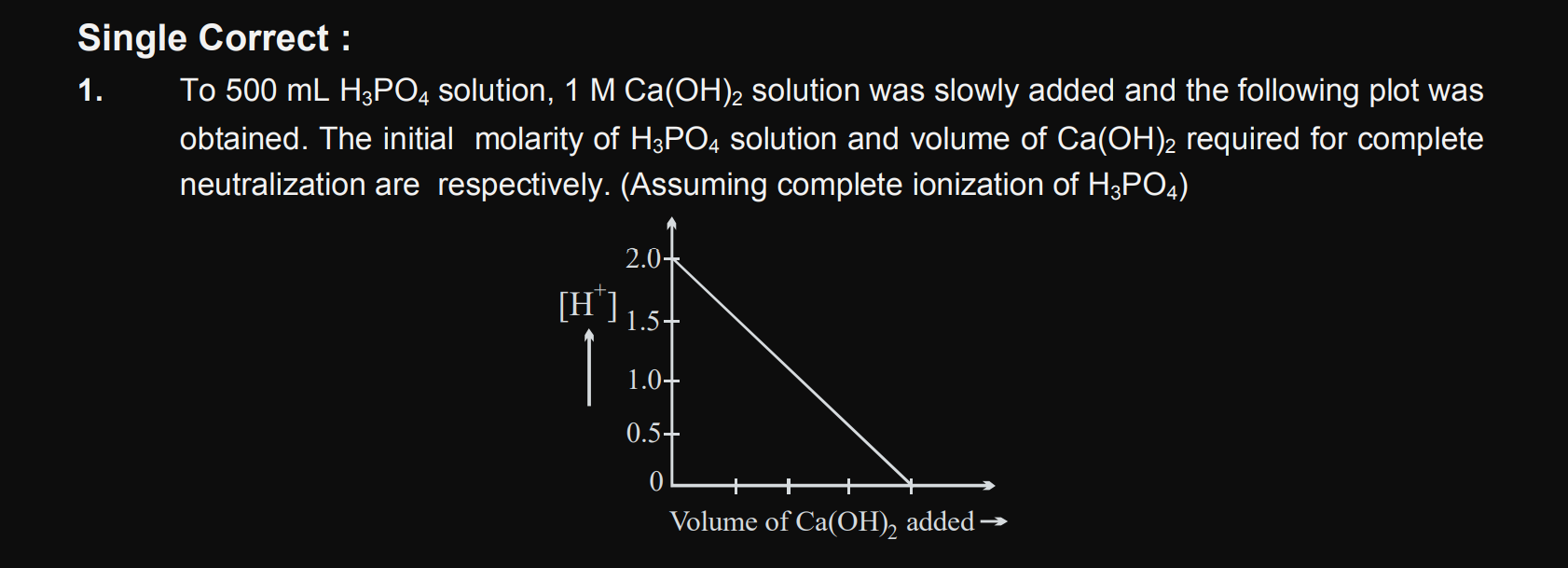

JEE Main - Chemistry

Asked by ashwinskrishna2006 | 17 Apr, 2024, 07:17 PM

NEET - Biology

Asked by anandibastavade555 | 17 Apr, 2024, 07:08 PM

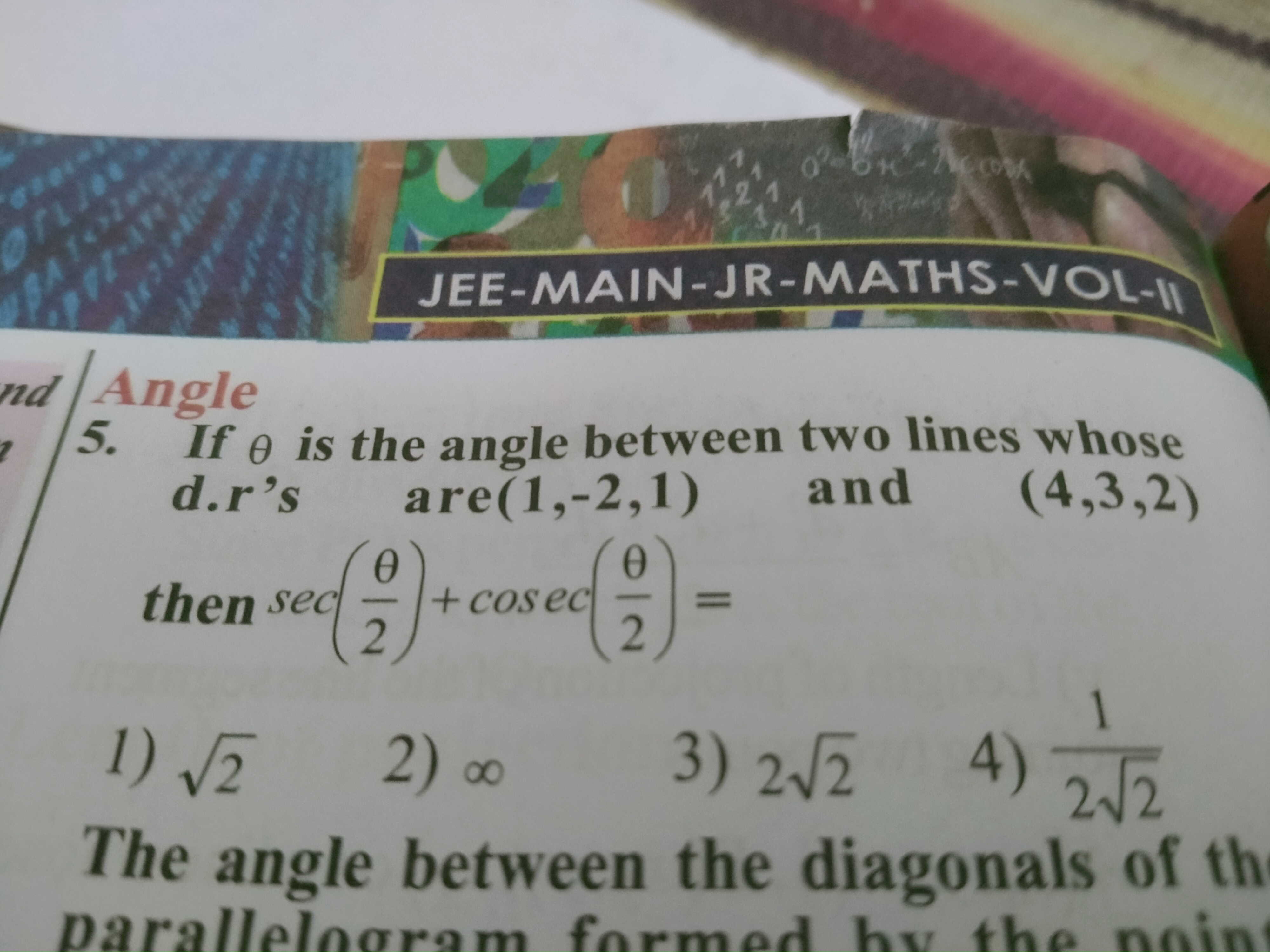

NEET - Physics

Asked by gouranshi84 | 17 Apr, 2024, 05:23 PM

CBSE VII - Maths

Asked by shouryavijay0020012 | 17 Apr, 2024, 03:45 PM